This issue brings together several reflections on where artificial intelligence (AI) stood in medicine at the end of 2025, along with deep dives into AI’s implications for public health and resource use. We also highlight global policy shifts that could move AI development toward a less regulated landscape, alongside resources aimed at integrating AI responsibly into health care systems and into medical and genetic counseling education.

This month’s reading also explores emerging risks for health care professionals, including legal liability when clinicians override AI recommendations, the potential for deteriorating workplace conditions linked to AI deployment (and how to fight back!), and gaps in international coordination to address AI-enabled bioterrorism.

As always, we feature developments at the intersection of genomics and AI, along with educational resources and upcoming events — whether you are beginning to explore AI or are making strategic decisions about its use within your organization.

Editor: Katya Orlova, MPH, CGC, PhD

Contributing Editors: KT Curry, MS, CGC, Marlena Ahn, MGCS, LCGC, Ping Gong, MS, CGC, Lara Sucheston-Campbell, PhD, MS, Amy Lemke, PhD, MS

Subscribe to the AI/ML Email List for Updates

NEWS & OPINION

THE MOMENT AI ARRIVED IN THE CLINIC: INSIGHTS FROM THE SAIL 2025 YEAR IN REVIEW

Elias et al, 2025

2025 marked a turning point, as AI moved from experimental pilots toward widespread clinical adoption. This critical overview weighs hype against tangible results across six key areas that defined AI in medicine in 2024-2025. It focuses on meaningful clinical and operational impact and performance (for example, ambient scribes reducing burnout) while also addressing persistent challenges, safety and the need for regulatory oversight.

Tags: Overview, Medicine, ELSI

CONSTRUCTION AND CONSEQUENCES: THE HUMAN IMPACTS OF ARTIFICIAL INTELLIGENCE DATA CENTERS

UAB Institute for Human Rights, 2025

AI data centers pose public health risks, and these risks are often concentrated in rural, low-income and historically marginalized communities. These facilities use enormous amounts of energy: their electricity use is projected to reach nearly 2,967 terawatt-hours (TWh) by 2030. Much of this energy is generated from fossil fuels, which contribute to air pollution associated with cardiovascular disease, cancer, respiratory disease and adverse pregnancy and child development outcomes, in addition to broader climate change-related impacts. Data centers also rely on backup diesel generators to maintain operations during grid disruptions, and these generators release nitrogen oxides (NOx), fine particulate matter (PM₂.₅) and black carbon. The respiratory health impacts of this air pollution are estimated to cost up to $20 billion per year in the United States by 2028.
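A terawatt-hour is a trillion watt-hours, a quantity of energy, while watts measure power (a rate). If the projected figure is read as about 2,967 TWh of electricity used over one year, this minimal Python sketch converts it into the average continuous power draw it implies. The 8,760-hour (non-leap) year is an assumption, and the function name is illustrative.

```python
# Assumption: a non-leap year of 365 days x 24 hours.
HOURS_PER_YEAR = 365 * 24  # 8,760 hours

def average_power_gw(annual_energy_twh: float) -> float:
    """Convert annual energy use in TWh into average continuous power in GW."""
    # 1 TWh = 1,000 GWh; spreading those GWh over every hour of the year gives GW.
    return annual_energy_twh * 1_000 / HOURS_PER_YEAR

projected_2030_twh = 2967  # figure cited in the UAB report, read here as TWh per year
print(f"{average_power_gw(projected_2030_twh):.0f} GW of continuous demand")
```

Under that reading, the projection corresponds to roughly 339 GW drawn around the clock, comparable to more than 300 one-gigawatt power plants running continuously.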

Tags: ELSI, Medicine, Public Health

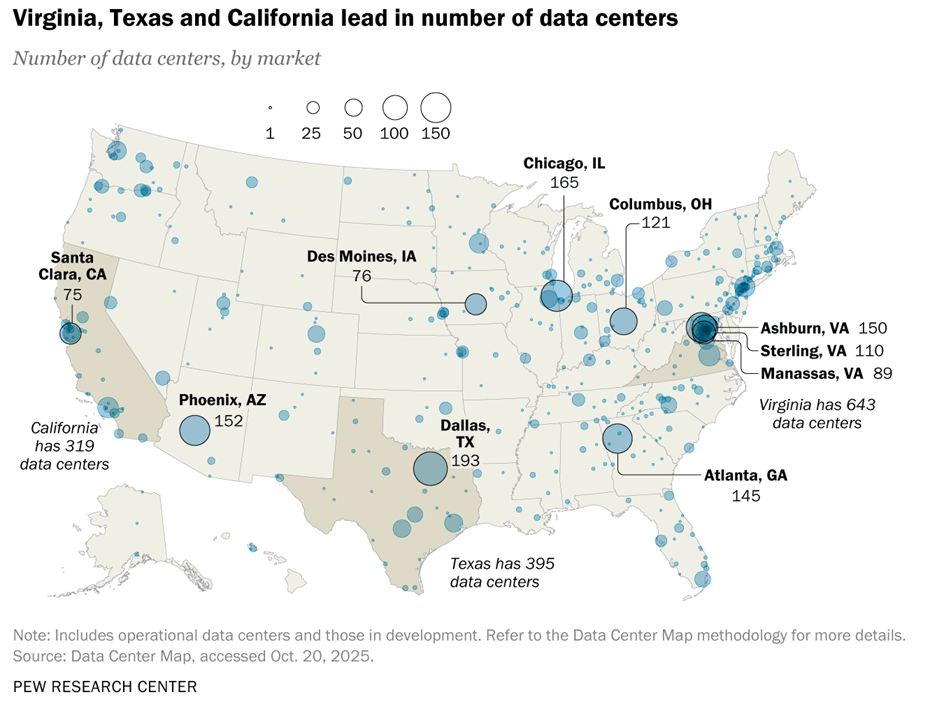

WHAT WE KNOW ABOUT ENERGY USE AT U.S. DATA CENTERS AMID THE AI BOOM

Pew Research Center, 2025

This article takes a deep dive into U.S. data centers: how many there are, where they are located, how much energy they use and where it comes from, how they affect Americans' electricity bills, and what Americans think about AI's environmental impact. A key quote: “U.S. data centers consumed 183 terawatt-hours (TWh) of electricity in 2024, according to IEA estimates. That works out to more than 4% of the country’s total electricity consumption last year — and is roughly equivalent to the annual electricity demand of the entire nation of Pakistan.”
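The "more than 4%" figure can be sanity-checked with simple arithmetic. This minimal Python sketch uses only the two numbers in the quote, with no outside data, to derive the ceiling that the statement places on total U.S. electricity consumption in 2024; the helper name is illustrative.

```python
def implied_max_total_twh(part_twh: float, min_share: float) -> float:
    """If part_twh is more than min_share of a total, the total must be below part_twh / min_share."""
    return part_twh / min_share

us_data_centers_2024_twh = 183  # IEA estimate quoted by Pew
stated_min_share = 0.04         # "more than 4%" of U.S. consumption

ceiling = implied_max_total_twh(us_data_centers_2024_twh, stated_min_share)
print(f"Implied total U.S. consumption: under {ceiling:,.0f} TWh")
```

In other words, the quote implies the country as a whole used somewhat less than about 4,575 TWh of electricity in 2024.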

Tags: ELSI

COLLEEN CALESHU, MS, CGC, ON WHETHER AI WILL REPLACE GENETIC COUNSELORS

CGTlive, 2025

The senior director of research and real-world data at Genome Medical, Colleen Caleshu, MS, CGC, discussed multiple perspectives on the future of genetic counseling in the age of AI. Caleshu noted that “many people who need genetic counseling don’t currently have access to it” and that “not everyone who needs a genetic test necessarily requires full counseling from a genetic counselor.” AI tools, she suggested, could help fill access gaps by supporting patients whose needs can be met through technology, while ensuring that those requiring complex, personalized care still receive it from human counselors. This is the third part of the interview; see also the first and second parts. (1 min 43 s)

Tags: Genetic Counseling, ELSI

AI VENDORS HAVE A LOT TO OFFER — INCLUDING RISKS

Healthcare IT News, 2025

- This article shares an interview with Betsy Castillo, RN, a nurse and artificial intelligence expert who advises hospitals and health systems on the potential hazards of working with AI vendors. She describes questions to ask vendors to assess whether they truly understand health care.

- For example, she recommends asking, “Who helped design and validate this system? If clinicians, abstractors and data stewards were not part of the process from the outset, the product may not withstand the demands of a live healthcare environment,” and “How does the system handle ambiguity?”

Tags: Medicine, Public Health

MARK ZUCKERBERG AND PRISCILLA CHAN RESTRUCTURE THEIR PHILANTHROPY

NY Times, 2025

- Mark Zuckerberg and Priscilla Chan are restructuring the Chan Zuckerberg Initiative (CZI) to focus almost exclusively on AI-driven biomedical research, aiming to “cure or manage all diseases by the end of the century.” The philanthropy is shifting away from social policy (programs in areas like social advocacy, immigration reform, criminal justice and DEI) and education to AI-powered biology, significantly expanding the Biohub network.

- Specific projects include a virtual cell mapping platform, an AI model that analyzes genetic sequences to detect disease and a large language model that can perform biological reasoning. Most recently, CZI partnered with the Innovative Genomics Institute (IGI) to create the Center for Pediatric CRISPR Cures.

- The Center will use CRISPR-based editing technology to advance cures for severe pediatric genetic diseases and will bridge CRISPR cure design and testing at the University of California, Berkeley (UC Berkeley) with clinical treatment at the University of California, San Francisco (UCSF).

Tags: Medicine, Public Health

A CALL FOR A GLOBAL CYBER BIOSECURITY FRAMEWORK IN GENOMICS

Joly et al, 2026

Rapid advances in AI and biotechnology, coupled with geopolitical tensions, increase the risk that genomic data will be misused for harmful purposes. Fragmented and reactive governance policies could heighten the risks of dual-use genomics, undermining international collaboration and data security. This Comment calls on the international genomics community to come together to establish robust, harmonized standards to safeguard genomic data.

Tags: Public Health, ELSI

MOST-READ: THE STANFORD HAI STORIES THAT DEFINED AI IN 2025

Stanford University: Human-Centered AI, 2025

These stories, ranked by readership, capture a year when society began reckoning with AI not as science fiction but as the imperfect, consequential technology reshaping mental health, childhood safety, workplace dignity, privacy and geopolitical power. Special mention goes to the article “AI Index 2025: State of AI in 10 Charts.”

Tags: Medicine, ELSI, Chatbot

RESEARCH

SPECIAL ISSUE: AI IN GENETIC COUNSELING FROM THE JOURNAL OF GENETIC COUNSELING

JoGC, 2026

This virtual issue includes all 12 previously published articles (2019-2026) on AI/ML and genetic counseling. Recent article of note:

- CROSS-SECTIONAL INVESTIGATION INTO EARLY USE OF CHATGPT IN EDUCATION AMONG GENETIC COUNSELING STUDENTS AND FACULTY

Ahimaz et al, 2026

- Genetic counseling students (n=99) and faculty (n=62) were surveyed in July-August 2023 about how they used ChatGPT, a period that corresponds best with use of the GPT-3.5 model. Table 3 summarizes how faculty used ChatGPT, and Table 4 lists how GC students used ChatGPT during graduate training. The possibility of ChatGPT providing incorrect information was thankfully well understood by both students and faculty, and both groups, even in 2023, acknowledged that integrating AI into education was inevitable. Faculty concerns included students being hindered in developing writing skills and possible dependency. Today, in 2026, most students use AI tools, so questions about how to thoughtfully integrate AI into graduate GC education are even more urgent.

Tags: Genetic Counseling, Education, ELSI, Chatbot

INTEGRATING ARTIFICIAL INTELLIGENCE INTO MEDICAL EDUCATION: A NARRATIVE SYSTEMATIC REVIEW OF CURRENT APPLICATIONS, CHALLENGES AND FUTURE DIRECTIONS

Ahsan, 2025

- This article shares a narrative review of 14 studies exploring how AI is being integrated into undergraduate, postgraduate and continuing medical education programs.

- A wide range of applications was found: diagnostic assistance, curriculum redesign, enhanced assessment methods and streamlined administrative tasks.

- Challenges: ethical dilemmas, the lack of validated curricula, limited empirical research and infrastructural constraints that hinder broader implementation.

- There is a need for: well-structured AI curricula, targeted faculty development, interdisciplinary collaboration and ethically sound practices.

Tags: Overview, Education, ELSI

GENERATIVE ARTIFICIAL INTELLIGENCE IN MEDICINE

Teo et al, 2025

This already well-cited review article discusses genAI use within medicine, including recent technical advancements in clinical decision support, image analysis, research and other tasks. Approaches to validation and specific challenges are also discussed.

Tags: Overview, Medicine

EMBRACING THE FUTURE OF MEDICAL EDUCATION WITH LARGE LANGUAGE MODEL–BASED VIRTUAL PATIENTS: SCOPING REVIEW

Zeng et al, 2025

- This is a review of 28 studies, most published in the last two years

- Technologies used to present virtual patients include social robots, virtual reality and mixed reality

- The evaluation of LLM-based virtual patients mainly emphasizes user experience. However, evaluation methods lack standardization, and only 13% (3/23) of studies used validated tools in assessing LLM-based virtual patients, while only 21.7% (5/23) of studies objectively measured learning outcomes facilitated by LLM-based virtual patients.

- All included studies expressed a positive attitude toward LLM-based virtual patients; however, they overlooked privacy and security considerations in practical applications.

Tags: Overview, Chatbot, Medicine

RESEARCH HIGHLIGHT: AI IDENTIFIES PROMOTER VARIANTS THAT ALTER GENE EXPRESSION

Faial, 2025

Most patients with genetic conditions remain without a genetic diagnosis, in part because some disease is caused by noncoding variants. Interpreting the functional consequences of noncoding genetic variants remains a key challenge in genomics. The paper describes the development of PromoterAI, a deep neural network that specifically identifies promoter variants predicted to affect gene expression. The authors also functionally validated potentially clinically relevant variants and estimated that promoter variation accounts for 6% of the genetic burden associated with rare diseases. Research article: Jaganathan et al., 2025.

Tag: Disease Prediction

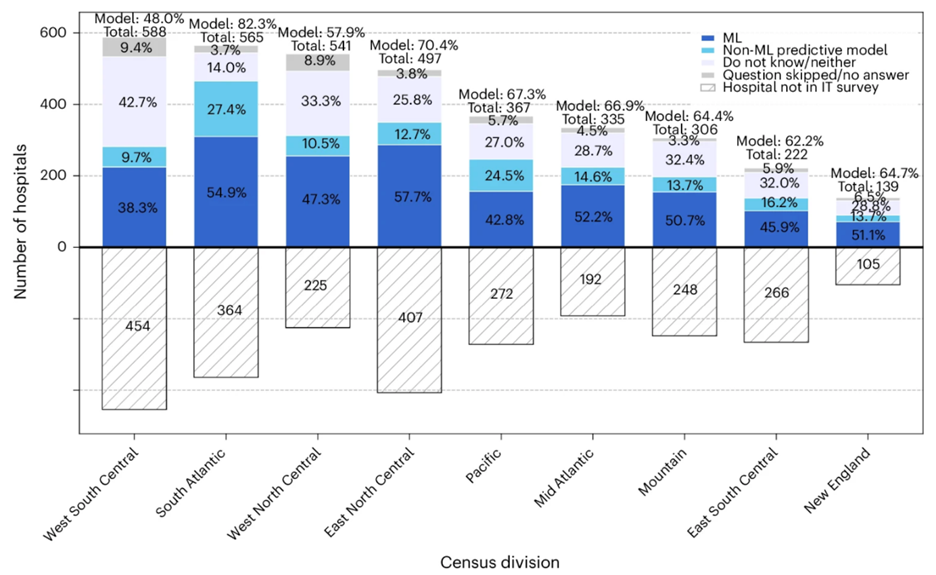

THE LANDSCAPE OF AI IMPLEMENTATION IN US HOSPITALS

Hwang et al, 2026

- Uneven adoption and implementation of AI in hospitals can reinforce existing care gaps and inefficiencies

- The article analyzed data from 3,560 US hospitals using the 2023 American Hospital Association (AHA) Annual Survey, the 2023-2024 AHA Information Technology Supplement, community-level socioeconomic indicators and 2023-2025 Centers for Medicare & Medicaid Services hospital quality metrics.

- AI implementation across US hospitals is uneven and context-dependent

Tags: Overview, Public Health, Medicine, ELSI

AI AGENT IN HEALTH CARE: APPLICATIONS, EVALUATIONS AND FUTURE DIRECTIONS

Zhao et al, 2026

- This review traces the historical evolution and core characteristics of AI agents, and systematically examines their applications in assisted diagnosis, clinical decision support, medical report generation, patient-facing chatbots, health care system management and medical education.

- Authors analyze existing evaluation frameworks, focusing on key dimensions and performance metrics, and propose critical directions for future development: integration with embodied systems, hybrid expert models, expanded evaluation paradigms, safety and controllability assurance, ethical governance and user trust, and guidance for evolving roles of healthcare staff.

- This review aims to offer a comprehensive perspective on the development and implementation of AI agents in healthcare, providing theoretical support for future research, practice, and governance.

Tags: Overview, Medicine, ELSI

EDITORIAL: CLINICIAN, PATIENT AND ORGANIZATIONAL PERSPECTIVES ON AMBIENT AI SCRIBES

Bakken, 2026

- The editorial reviews five recent studies on ambient AI scribe technologies in health care, focusing on clinician experiences, organizational deployment, patient attitudes and documentation quality.

- Ambient scribes can reduce documentation time, lower cognitive burden, and improve efficiency and patient engagement, though concerns remain about note accuracy, subspecialty and setting customization, and loss of clinician voice.

- A large health system case study found enterprise-wide deployment feasible, with substantial clinician uptake but barriers related to workflow, note quality and concerns about recording visits.

- Patient survey data showed low awareness of AI scribes and mixed acceptance, with privacy concerns reducing willingness to adopt the technology.

- The editorial also highlights new work on automated detection of stigmatizing language in electronic health records and raises the open question of whether AI documentation tools will reduce or amplify such language in clinical notes.

Tags: Medicine, ELSI

REGULATION, POLICY, GOVERNANCE

SENATE PROPOSAL AIMS TO ESTABLISH AI ‘OVERRIDE’ BUTTON IN HEALTH CARE

Hansen, 2025

The proposed legislation would require artificial intelligence systems used in health care settings to include an option to override decisions made by AI, preserving human judgment in health decisions. It would also require that the identity of clinicians who use the override option be kept anonymous, establish whistleblower protections for those who report violations of the law and protect clinicians who choose to override the AI. In addition, the bill would require every hospital or clinic that uses AI to make patient-related decisions to set up an internal committee to oversee how those systems are used. As of 2025, Congress had not passed any federal legislation that specifically regulates the use of AI in health care settings.

Tags: Medicine, ELSI

PODCAST: MEDICAL AI AND CLINICIAN SURVEILLANCE — THE RISK OF BECOMING QUANTIFIED WORKERS

Cohen et al, 2026

Interview with lawyer and ethicist I. Glenn Cohen on the professional implications of AI-based monitoring systems in medicine. Many AI innovations could benefit patients and clinicians, yet medicine should heed lessons from industries in which AI adoption has reduced worker autonomy and worsened working conditions. Cohen’s perspective article addresses how health care workers can push back against these risks. The most promising tactics are securing a seat at the table; naming and shaming invasive hospital systems, payors and malpractice insurers; collective bargaining; and adopting a shared governance approach when these AI tools are introduced. The podcast is free to listen to (7 min 17 s), but the accompanying perspective article is behind a paywall.

“There is a lot that worries me, but the incentives are number one. What gets built is a function of what gets paid for. We may be giving up on some of what has the highest ethical value, the democratization of expertise and improving access, for lack of a business model that supports it. Government may be able to step in to some extent as a funder or for reimbursement, but I am not that optimistic.”

Tags: ELSI, Public Health

WEBINAR: AMERICA’S WORKFORCE: LEARNING AND WORKING IN THE AGE OF AI

Bipartisan Policy Center, 2025

Discussion with industry experts unpacking AI’s impact on the workforce and education sector, the skills learners and workers need to succeed in an AI economy, and what policymakers can do to maximize the benefits of AI for learners, workers, educators and employers alike. The webinar features speakers from LinkedIn, SHRM, Pearson and the Bipartisan Policy Center who specialize in labor economics, workforce policy, education and human capital. (59 mins)

Tag: Education

US WITHHOLDS SUPPORT FROM MAJOR INTERNATIONAL AI SAFETY REPORT

Time, 2026

- The second International AI Safety Report found that current risk management techniques are “improving but insufficient.”

- Guided by 100 experts and backed by 30 countries and international organizations including the United Kingdom, China and the European Union, the report is meant to set an example of “working together to navigate shared challenges.” But unlike last year, the United States declined to sign on to the report.

- While some questions remain divisive, "there is a high degree of convergence" on the core findings, the report notes. AI systems now match or exceed expert performance on benchmarks relevant to biological weapons development, such as troubleshooting virology lab protocols. There is strong evidence that criminal groups and state-sponsored attackers are actively using AI in cyber operations.

- Rather than propose a single fix, the report recommends stacking multiple safety measures.

Tag: ELSI

EVENTS

MAYO CLINIC AI RESEARCH SUMMIT — A NEW ENGINE FOR REAL-WORLD EVIDENCE GENERATION: MULTI-AGENTIC AI AND SIMULATION

Mayo Clinic, 2026

- Conference focused on multi-agentic AI and simulation.

- Multi-agentic AI uses teams of specialized, autonomous AI agents to solve complex, multi-step tasks by collaborating, delegating and communicating, rather than relying on a single, overburdened AI model. Through simulation frameworks, these models can move beyond analyzing what has been observed to reasoning about and generating evidence for scenarios that have not yet occurred.

- June 4-5, 2026 in Rochester, Minnesota.

- Virtual attendance available.

- Early registration rate: $425, early student registration rate: $300.
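To make the delegation idea concrete, here is a toy Python sketch. It is not Mayo Clinic's approach or any particular agent framework: the coordinator, the three specialist agents and their fixed plan are hypothetical, and plain functions stand in for the AI models a real system would call. It shows one task being split up, delegated and passed along so that later agents can build on earlier agents' findings.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Each hypothetical agent takes a task plus the findings of agents that ran before it.
Agent = Callable[[str, Tuple[str, ...]], str]

@dataclass
class Subtask:
    skill: str    # which specialist agent should handle this step
    payload: str  # input handed to that agent

# Stand-ins for specialist agents; a real system would call a model or tool here.
def literature_agent(task: str, context: Tuple[str, ...]) -> str:
    return f"[literature] published evidence on '{task}'"

def data_agent(task: str, context: Tuple[str, ...]) -> str:
    return f"[data] observed outcomes for '{task}'"

def simulation_agent(task: str, context: Tuple[str, ...]) -> str:
    # Builds on earlier findings to reason about scenarios not yet observed.
    return f"[simulation] projected scenario for '{task}' using {len(context)} prior findings"

AGENTS: Dict[str, Agent] = {
    "literature": literature_agent,
    "data": data_agent,
    "simulation": simulation_agent,
}

def plan(task: str) -> List[Subtask]:
    """Coordinator: split one question into ordered subtasks (a fixed plan in this toy)."""
    return [Subtask(skill, task) for skill in ("literature", "data", "simulation")]

def run(task: str) -> List[str]:
    """Delegate each subtask to its specialist, passing along earlier results."""
    results: List[str] = []
    for sub in plan(task):
        results.append(AGENTS[sub.skill](sub.payload, tuple(results)))
    return results

for finding in run("a new screening policy"):
    print(finding)
```

In practice the plan itself might be produced by a model rather than hard-coded; the fixed plan here simply keeps the control flow easy to follow.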

Tags: Education, Medicine

TOOLS & TIPS

CLAIMS DENIAL NAVIGATOR

Microsoft, 2025

More than 700 rural hospitals across the United States are currently at risk of closure due to financial hardship; this freely available AI-enabled tool helps hospitals address denied claims more efficiently and receive appropriate reimbursement.

Tags: ELSI, Medicine, Insurance

PubMatcher: A WEB APP TO SUPPORT GENOMIC DATA INTERPRETATION THROUGH SIMPLIFIED BIBLIOGRAPHIC RESEARCH

Marin et al, 2026

- Genomic sequencing advancements have led to an explosion of data, making the interpretation of variants in lesser-known genes a day-to-day challenge for geneticists

- PubMatcher simplifies bibliographic research across multiple genes at once, provides quick and easy access to relevant gene information, and helps identify potential genotype-phenotype associations using PubMed complemented by additional data. By significantly reducing analysis time, PubMatcher supports the interpretation of novel or under-documented genes.

- Freely available for academic and non-commercial use, PubMatcher is a user-friendly and efficient solution for researchers, clinical scientists and clinical geneticists working on pan-genomics analyses.
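PubMatcher's internals are not described here, so the following is only a generic sketch of batched literature lookup, not PubMatcher's method. Assuming NCBI's public E-utilities esearch endpoint, it builds one PubMed query URL per gene, pairing each gene symbol with a phenotype keyword restricted to titles and abstracts. The gene symbols and phenotype are arbitrary examples, and the code only constructs URLs; it does not contact NCBI.

```python
from urllib.parse import urlencode

# NCBI E-utilities search endpoint (returns matching PubMed IDs when fetched).
ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"

def pubmed_term(gene: str, phenotype: str) -> str:
    """Pair a gene symbol with a phenotype keyword; [tiab] searches titles and abstracts."""
    return f'"{gene}"[tiab] AND "{phenotype}"[tiab]'

def esearch_url(gene: str, phenotype: str, retmax: int = 20) -> str:
    """Build (but do not send) a PubMed esearch request for one gene."""
    params = {"db": "pubmed", "term": pubmed_term(gene, phenotype), "retmax": retmax}
    return f"{ESEARCH}?{urlencode(params)}"

# Example gene symbols, e.g. drawn from a list of candidate variants.
candidate_genes = ["SCN2A", "STXBP1", "KCNQ2"]
for gene in candidate_genes:
    print(esearch_url(gene, "epilepsy"))
```

Fetching each URL would return a list of matching PubMed IDs; NCBI limits request rates (about three per second without an API key), so batch tools typically throttle their queries.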

Tag: Genetic Testing

RESOURCES

WEBINAR SERIES: AI SKILL BUILDING FOR MEDICAL EDUCATORS

AAMC, 2025

Tags: Education, Medicine, ELSI

FREE COURSE: THE SCIENCE AND IMPLICATIONS OF GENERATIVE AI

Harvard Kennedy School, 2024

- Introductory course on genAI and its intersection with public policy, newly available to the public. It is geared toward leaders in healthcare, business, government, academia, etc.

- Course focuses on ChatGPT, including practical applications, and its benefits and challenges. Course includes videos, slides, pre-class work and in-class activities. Note that this archived course was put together in 2024; the more recent versions of this class are paid.

- Course is also available as a YouTube playlist.

- See also other free courses on AI from Harvard: CS50’s Introduction to Artificial Intelligence with Python, CS50’s Computer Science for Business (top-down approach for non-technologists, emphasizes high-level concepts and design decisions).

Tags: Education, Overview, ELSI

Want a deeper dive into technical resources?

Visit our AI/ML Resources page for additional courses on Python, statistics and other ways to learn more about AI/ML, often for free.

Have feedback on our content? Want to share a resource? Have an AI/ML anecdote to share from your field? Contact Us.

NSGC AI/ML Subcommittee

The AI/ML Subcommittee is part of the National Society of Genetic Counselors’ (NSGC) Genomic Technologies Special Interest Group (SIG). We are dedicated to exploring and advancing the integration of AI and ML technologies to enhance the field of genetics and support the evolving role of genetic counselors.