AI is increasingly embedded in workflows, patient communications, information environments and even the scientific literature we rely on. Across this guest issue, a central tension emerges between capability and reliability. A researcher invented a fake disease and watched major AI chatbots confidently diagnose it; thousands of recent publications may contain AI-hallucinated citations that look convincingly real; and a structured safety evaluation of ChatGPT Health found it undertriaged more than half of emergencies. At the same time, work is underway to improve performance and integration, including:

- A proposed patient-clinician-AI triad framework for rare disease care

- Evidence that prompting strategies meaningfully influence the quality of AI clinical reasoning

- Emerging governance approaches designed to support safe and equitable use

Regulations around AI are also evolving rapidly, with federal efforts to establish a national AI policy framework, a new CDC AI strategy and a growing focus on the harder questions of accountability, transparency and responsibility in AI-enabled care.

Educational resources and tools this month include an interactive tool exploring AI’s potential impact on the genetic counselor role, practical guidance for supervising trainees navigating AI in their writing practice and recorded sessions on the ethical dimensions of health AI.

Guest Editor: Marlena Ahn, MGCS, CGC

Contributing Editors: Katya Orlova, MPH, CGC, PhD, KT Curry, MS, CGC, Ping Gong, MS, CGC, Lara Sucheston-Campbell, PhD, MS, Amy Lemke, PhD, MS

Subscribe to the AI/ML Email List for Updates

NEWS & OPINION

AI IN HEALTHCARE: REFOCUSING ON HUMAN CONNECTION

Jost, 2026

This opinion piece argues that as AI takes on a growing share of the cognitive tasks that have traditionally defined medical expertise, the clinician's value shifts toward the human dimensions of care that AI cannot replicate: relational competencies such as empathetic engagement and patient advocacy. The article raises a central question highly relevant to genetic counseling: What does clinical value look like when AI handles more of the cognitive load?

“The ability to provide comfort in a state of uncertainty, interpret complex information with wisdom and nuance, guide patients through difficult decisions and extend the simple yet profound gift of human presence: These remain capabilities that not even the most sophisticated algorithms can provide.”

Tags: Medicine

REIMAGINING CARE OF PEOPLE LIVING WITH RARE DISEASES WITH ARTIFICIAL INTELLIGENCE

Groza et al., 2026

This article argues that AI's role in rare disease care should be organized along the patient journey, from early suspicion through diagnosis to treatment development, and grounded in a three-way partnership between patient-family experts, clinicians and AI systems. In this "triad," patients and families contribute contextual knowledge, clinicians provide medical judgment and care coordination, and AI integrates the extensive multimodal data generated during the diagnostic odyssey. The authors emphasize bidirectional learning rather than one-way automation: AI systems can only improve if outcomes and lived experiences are systematically fed back into collective knowledge. For GCs in clinic, this framework maps directly onto existing practice, and its explicit centering of patient and family expertise alongside clinical and AI contributions reflects values that are foundational to genetic counseling.

“Taken together, the patient-clinician-AI triad provides a coherent framework for aligning technological possibilities with the realities of rare disease care. It clarifies where AI can add value — by integrating data and amplifying learning across cases — while underscoring that responsibility, trust and decision-making must remain shared.”

Tags: Medicine, Disease Prediction

SCIENTISTS INVENTED A FAKE DISEASE. AI TOLD PEOPLE IT WAS REAL

Stokel-Walker, 2026

A Swedish researcher invented a fictional eye condition called “bixonimania,” complete with fabricated preprints, a fictitious lead author and an AI-generated author photo, along with deliberate clues throughout (e.g., “Starfleet Academy” and “this entire paper is made up”), to test whether AI chatbots would absorb and spread it as medical fact. Within weeks, ChatGPT, Google Gemini, Microsoft Copilot and Perplexity were confidently presenting the condition as legitimate and advising users with symptoms to visit an ophthalmologist for further evaluation. The misinformation also reached the published medical literature: a peer-reviewed paper in Cureus cited the bixonimania research and was subsequently retracted in March 2026. The experiment highlights how easily LLMs can propagate misinformation when content appears authoritative, and it is another reminder to critically evaluate AI-generated content and to support our patients in doing the same.

Tags: Medicine, Chatbot

HALLUCINATED CITATIONS ARE POLLUTING THE SCIENTIFIC LITERATURE. WHAT CAN BE DONE?

Naddaf & Quill, 2026

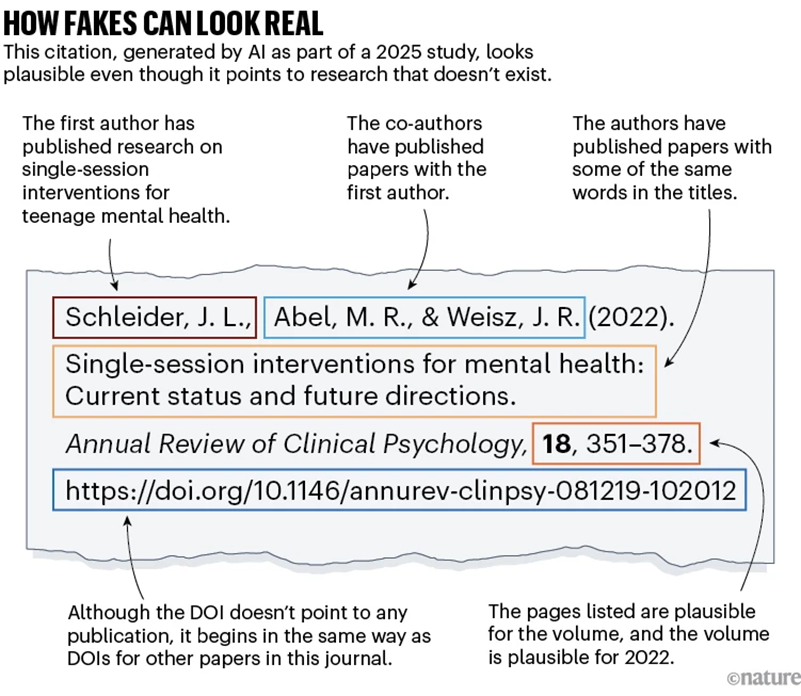

Tens of thousands of publications from 2025, across journals, books and conference proceedings, may contain invalid references generated by AI. The problem has grown more insidious over time: early hallucinated citations were obviously wrong, but newer ones, dubbed “Frankenstein” citations, convincingly combine fragments of genuine publications and are hard to spot. AI also hallucinates DOIs in both fabricated and genuine references. As researchers increasingly use LLMs to help with literature searches, formatting and writing, this is an immediate practical concern.

Figure illustrating how AI-hallucinated citations combine real elements (e.g., plausible authors, volume numbers, and DOI formats) to produce references that are difficult to detect as fake.
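To make the “Frankenstein” problem concrete, here is a minimal Python sketch of one possible check: confirm that a DOI is well formed, then compare the title, first author and year in a citation against the metadata registered for that DOI. The `REGISTRY` dictionary, the example DOIs and the `check_reference` helper are all hypothetical stand-ins; a real workflow would query an authoritative bibliographic database rather than a hard-coded table.

```python
import re

# Hypothetical registry standing in for an authoritative bibliographic lookup.
# The DOIs and metadata below are invented for illustration only.
REGISTRY = {
    "10.1000/demo.001": {"title": "Genomic screening in primary care", "first_author": "Smith", "year": 2021},
    "10.1000/demo.002": {"title": "Variant reclassification over time", "first_author": "Lee", "year": 2019},
}

# Simplified DOI shape: "10.", a 4-9 digit registrant code, "/", then a non-empty suffix.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def check_reference(ref: dict) -> list[str]:
    """Return problems found when comparing a cited reference with registry metadata."""
    doi = ref.get("doi", "")
    if not DOI_PATTERN.match(doi):
        return ["malformed DOI"]
    record = REGISTRY.get(doi)
    if record is None:
        return ["DOI not found in registry"]
    # A "Frankenstein" citation can pair a real DOI with another paper's title, author or year.
    problems = []
    for field in ("title", "first_author", "year"):
        if ref.get(field) != record[field]:
            problems.append(f"{field} does not match DOI record")
    return problems


# Real DOI, real author and year, but the title belongs to a different (hypothetical) paper.
frankenstein = {"doi": "10.1000/demo.001", "title": "Variant reclassification over time",
                "first_author": "Smith", "year": 2021}
print(check_reference(frankenstein))  # ['title does not match DOI record']
```

The DOI pattern alone accepts the mismatched citation; only the field-by-field comparison flags it, which illustrates why format-level checks tend to miss convincing fabrications.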

Tags: Scientific Writing, Publishing

KEEPING HEALTH EQUITY AT THE FOREFRONT OF THE ARTIFICIAL INTELLIGENCE REVOLUTION IN MEDICINE AND HEALTH

Galea, 2026

This editorial argues that the promise of AI in health care cannot be realized without deliberate attention to equity at every stage of development and deployment. AI tools trained on nonrepresentative data carry biases forward and scale disparities rather than reduce them. Galea highlights the concern that AI may accelerate improvements for populations already well-served, while further marginalizing those facing the greatest barriers to care — including rural communities, individuals with lower socioeconomic status and minority groups.

“In sum, there is little question that the deployment of AI widely, much discussed and debated, holds potential to improve human efficiency and productivity while also embedding challenges and potential to exacerbate some existing problems… Lessons from prior technological adoption suggest that health equity does not emerge spontaneously from the introduction of these technologies but must be designed, governed, financed and monitored into AI systems at the outset.”

Tags: Public Health, Medicine, ELSI

SHADOW AI: A HIDDEN RISK TO HEALTHCARE

Wolters Kluwer Health, 2026

A December 2025 survey of more than 500 US health care professionals found that 57% had encountered or used an unauthorized AI tool in their workplace, with faster workflows the most common reason. Burnout and administrative burden in health care are high, and when approved tools are unavailable or inadequate, clinicians improvise, revealing a mismatch between the demand for AI-enabled workflows and the availability of sanctioned tools. The report highlights the governance risks this creates (patient safety, data privacy and regulatory compliance) and argues that health care organizations need to understand their workers' resource gaps, identify tools that close those gaps safely and securely, and communicate guidelines for usage.

Tags: Public Health, Medicine, ELSI

The lock symbol means an article is available through open access.


RESEARCH

LIMITATIONS OF LARGE LANGUAGE MODELS IN CLINICAL DIAGNOSTIC REASONING

Tordjman & Mei, 2026


This commentary posed the question: “While the latest generation of reasoning models exhibits remarkable fluency and an apparent high-level logic, do these models meaningfully support medical clinical diagnostic reasoning, especially in complex, high-stakes settings?”

The commentary discussed Rao et al.'s evaluation of 21 LLMs using standardized clinical vignettes. Although the models could arrive at the correct final diagnosis when given complete patient information suited to pattern recognition, they often failed at the earlier, reasoning-driven steps of the diagnostic process (e.g., generating appropriate differential diagnoses). These findings suggest that reliable AI-driven diagnosis has not yet arrived, but they hint at “a future of specialization” where “domain-specific medical models trained on curated clinical datasets integrating expert reasoning pathways may provide greater precision and safety than the current general-purpose LLMs.”

Perfect or complete information seldom precedes diagnosis, especially in certain specialties; therefore, the ability to navigate uncertainty through the often iterative and relationship-driven diagnostic odyssey remains critical to practice.

Tags: Overview, Medicine, Disease Prediction

PROTECTING CLINICAL VALUE JUDGMENT IN THE AGE OF AI

Patil et al., 2026


Who gets to decide what values are embedded in clinical tools, and how do clinicians maintain meaningful agency when those tools operate at scale? When a hospital configures an AI alert system to use a high-sensitivity threshold to improve detection rates, it is making a value judgment — one that will produce more alerts, but also more potential for alert fatigue and overdiagnosis. When another hospital sets a higher-specificity threshold to contain costs, it is making a different value judgment — one that upholds fiscal stewardship but may delay detection. Model developers make some value choices during model design, but a separate and underacknowledged set of value choices gets made at the institutional procurement and configuration stage. This commentary proposes a collaborative framework of federal transparency mandates for developers via Model Cards (structured documentation disclosing embedded choices) paired with internal multidisciplinary reviews to ensure alignment with an institution’s priorities and patient population.

“This joint effort — where regulators mandate the structure of transparency and multidisciplinary teams provide the substance of deliberation — can prevent AI systems from masking value trade-offs. In doing so, it preserves the clinical value judgment essential to patient-centered care.”
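The threshold trade-off described above can be illustrated in a few lines of Python. The risk scores and outcomes are invented, and `evaluate` is a hypothetical helper rather than anything from Patil et al.; the sketch only shows that moving an alert threshold shifts errors between false alarms and missed cases, not which threshold is the right one.

```python
# Invented example data: model risk scores and true outcomes (1 = condition present).
scores = [0.95, 0.80, 0.65, 0.55, 0.40, 0.35, 0.20, 0.10]
labels = [1, 1, 0, 1, 0, 0, 0, 0]


def evaluate(threshold: float) -> tuple[int, float, float]:
    """Hypothetical helper: fire an alert when score >= threshold and
    return (alerts fired, sensitivity, specificity)."""
    tp = fp = tn = fn = 0
    for score, label in zip(scores, labels):
        alert = score >= threshold
        if alert and label == 1:
            tp += 1  # true case flagged
        elif alert:
            fp += 1  # false alarm
        elif label == 1:
            fn += 1  # true case missed
        else:
            tn += 1  # non-case correctly left alone
    return tp + fp, tp / (tp + fn), tn / (tn + fp)


for threshold in (0.3, 0.7):  # high-sensitivity vs. high-specificity configuration
    alerts, sens, spec = evaluate(threshold)
    print(f"threshold={threshold}: alerts={alerts}, sensitivity={sens:.2f}, specificity={spec:.2f}")
```

With these made-up numbers, a threshold of 0.3 fires 6 alerts and catches all 3 true cases (sensitivity 1.00) but correctly stays quiet for only 2 of 5 non-cases (specificity 0.40). A threshold of 0.7 fires 2 alerts with no false alarms (specificity 1.00) but misses one true case (sensitivity 0.67). Neither configuration is "correct"; each encodes a different value judgment.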

Tags: Medicine, ELSI

INCREASING LARGE LANGUAGE MODEL ACCURACY FOR CARE-SEEKING ADVICE USING PROMPTS REFLECTING HUMAN REASONING STRATEGIES IN THE REAL WORLD: VALIDATION STUDY

Kopka & Feufel, 2026

This validation study of 10 ChatGPT models on 45 real patient cases spanning a range of urgency levels found that LLMs gave more accurate and clinically appropriate care-seeking advice when prompts (recognition-primed and data-frame prompting) encouraged them to mimic human decision-making: thinking step by step, acknowledging uncertainty and weighing multiple possibilities. AI can approximate clinical reasoning when guided well, but the quality of that approximation depends heavily on the human directing it. How you prompt matters.
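For readers who want to experiment, the Python sketch below assembles a prompt that asks a model to reason step by step, weigh several possibilities and state its uncertainty, the elements this study associates with better advice. The wording and the `build_care_seeking_prompt` function are hypothetical illustrations, not the recognition-primed or data-frame prompts used by Kopka & Feufel, and any model output should still be reviewed critically.

```python
def build_care_seeking_prompt(case_description: str) -> str:
    """Assemble a prompt that asks a model to reason step by step, weigh several
    possibilities and state its uncertainty before advising.
    Hypothetical wording; not the prompts used in the study."""
    steps = [
        "1. Summarize the key symptoms and timeline in the case.",
        "2. List at least three possible explanations, from most to least likely.",
        "3. For each, note what information is missing or uncertain.",
        "4. Recommend a care level (emergency, urgent, routine or self-care) and explain why.",
        "5. State how confident you are and what would change your recommendation.",
    ]
    return (
        "You are helping a person decide where to seek care.\n"
        f"Case: {case_description.strip()}\n"
        "Work through the following steps in order:\n"
        + "\n".join(steps)
    )


print(build_care_seeking_prompt("Sudden chest pain radiating to the left arm for 20 minutes."))
```

Keeping the reasoning steps in a fixed list makes it easy to compare outputs with and without particular steps, which is one informal way to see how prompt structure changes the advice.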

Tags: Medicine, Chatbot

CHATGPT HEALTH PERFORMANCE IN A STRUCTURED TEST OF TRIAGE RECOMMENDATIONS

Ramaswamy et al., 2026


This structured safety evaluation of ChatGPT Health as a consumer tool found significant safety risks, particularly undertriage of emergency conditions (52% of “gold-standard” emergencies) and “inconsistent activation of crisis safeguards” in situations of suicidal ideation. This study calls for prospective validation of these tools, if not premarket safety evaluation requirements, before deployment and public use.

Tags: Medicine, Chatbot

HELP-SEEKING IN THE AGE OF AI: CROSS-SECTIONAL SURVEY OF THE USE AND PERCEPTIONS OF AI-BASED MENTAL HEALTH SUPPORT AMONG US ADULTS

Ueda et al., 2026


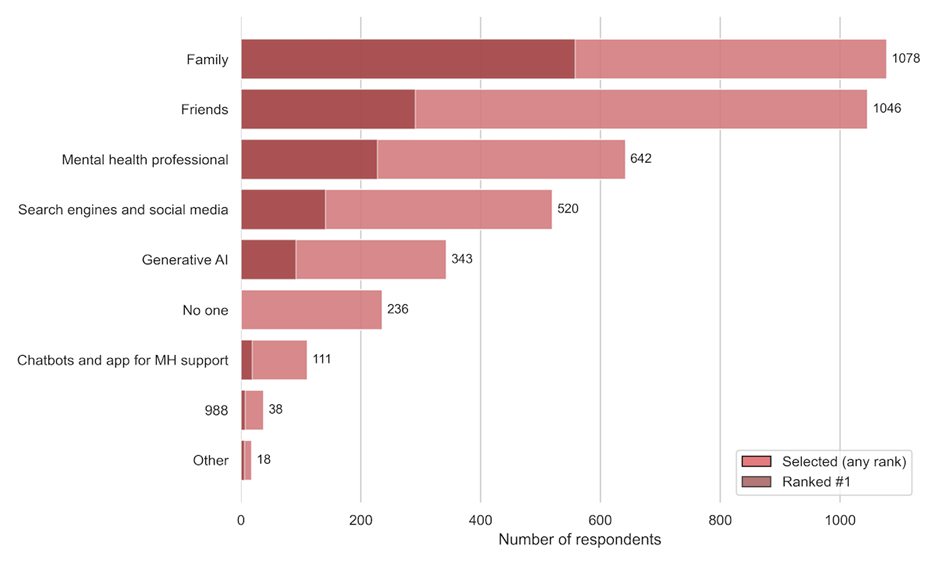

A survey of 1,805 US adults aged 18-49 found that about one-third reported using AI tools at least once a week for mental health support, with 5.5% described as “heavy users” regularly spending hours doing so. While family and friends remained the primary source of mental health support and most respondents still preferred human counselors over AI tools, roughly one in four AI users reported seeking human counseling less often since adopting AI tools — suggesting AI may be reshaping help-seeking patterns even if it hasn't replaced human support.

For GCs, the parallel is direct: patients navigating anxiety around genetic results or diagnoses may already be processing those experiences through AI tools — before or alongside clinical care.

Figure shows resources that respondents can turn to for mental health support.

Tags: Medicine, Chatbot

REGULATION, POLICY, GOVERNANCE

GUARDRAILS FOR GENAI DRAFTED REPLIES IN PATIENT PORTAL MESSAGING

Sakr, 2026

This commentary argues that AI-drafted portal messages should be treated as a clinical workflow intervention, not a clerical convenience, and proposes five concrete governance principles for safe deployment: 1) scope GenAI drafting to message types where failure is low consequence, 2) tier messages by risk and require “active attestation” for higher tiers, 3) preserve accountable human authorship in both fact and interface, 4) make drafts auditable and monitor failure modes continuously and 5) adopt patient-facing transparency that is truthful but non-disruptive.

The commentary discussed a simulation study, Biro et al., which found that 13-15 out of 20 clinicians failed to address errors in AI-drafted replies, with 35-45% of the replies submitted entirely unedited. Errors included low-risk inaccuracies and potentially harmful omissions.

“From a governance perspective, this mirrors a familiar problem in other socio-technical domains: responsibility diffuses precisely where functional influence increases. Automation does not eliminate accountability; it redistributes it across interfaces, institutions, and workflows… In patient messaging, as elsewhere in medicine, the central ethical commitment is not to efficiency alone, but to ensuring that responsibility remains intelligible, traceable and owned.”

Tags: Medicine, ELSI

AI SAFETY IN HEALTH SYSTEMS: BUILDING INFRASTRUCTURE AND STRENGTHENING RISK MANAGEMENT PRACTICES

DukeHealth, 2026


This white paper argues that ensuring safe AI use in health systems requires a shift from reactive to proactive, lifecycle-based risk management. It makes the case that health systems need formal governance structures with clear accountability, centralized inventories of AI tools in use, and integration of AI oversight into existing patient safety reporting systems. There are no widely adopted standards for AI safety in practice, regulatory oversight is fragmented and many health systems lack the technical expertise needed for effective monitoring. For GCs thinking about how their organizations or institutions should govern AI adoption, this is a substantive starting point.

Tag: Medicine

THE RIGHT TO UNDERSTAND IN HEALTH CARE AI

Ankolekar, 2026

“What does a meaningful explanation of an AI medical decision look like in clinical practice, and how do we deliver it?” The European Union (EU) AI Act entered into force in August 2024, with requirements phasing in through 2027. It gives patients a new legal basis for demanding transparency about AI systems that influence their health care and can be seen as a preview of where U.S. regulations may go. This open-access analysis examines what the new requirements actually involve and finds a wide gap between legal compliance and genuine patient understanding.

The paper identifies core barriers: 1) Interpretability tradeoff, where more accurate models may be less explainable and forcing transparency could sacrifice diagnostic accuracy, 2) Clinical implementation challenges, where a clinician may struggle to explain elements like AI confidence scores and may not have adequate time to do so, 3) Automation bias, where a clinician may be overly influenced by or rely upon an AI output and 4) Patient literacy barrier, where between 22% and 58% of EU citizens report difficulty understanding health information.

Proposed solutions include 1) co-design, in which developers build explanation systems with patient input and comprehension testing, 2) institutional support, in which health care institutions allocate additional visit time for AI discussions and provide additional staff training, and 3) policy action, in which policymakers develop standards for patient understanding and invest in digital health literacy education.

Tags: Public Health, ELSI

CDC RELEASES AI STRATEGY, GUIDANCE WITH EYE TOWARD 'AGENTIC' USES

Alder, 2026


The CDC released a formal five-year AI strategy in March 2026, with notable attention to agentic AI (systems capable of taking autonomous, multi-step actions rather than simply responding to queries). Alongside the strategy, the CDC released practical guidelines for state, tribal, local and territorial public health agencies on agentic research tools and generative AI.

Tag: Public Health

TRUMP SIGNS ORDER TO BLOCK STATES FROM ENFORCING OWN AI RULES

Hoskins & Jamali, 2025

A December 2025 executive order sought to preempt state-level AI regulations, including health care AI transparency laws already in place in some states. Since then, the White House has released a full National Policy Framework (March 2026) calling on Congress to codify a federal framework that would preempt state AI laws that impose undue burdens, and the DOJ has formally established an AI Litigation Task Force to challenge selected state laws in court. Even so, the legal landscape remains unsettled because state laws continue to operate unless and until they are invalidated, preempted through valid federal action or superseded by Congress.

Tag: Public Health

TOOLS & TIPS

HOW WILL AI IMPACT YOUR JOB?

Jobsdata.ai

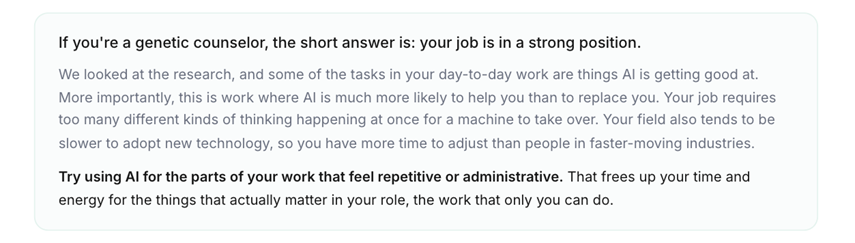

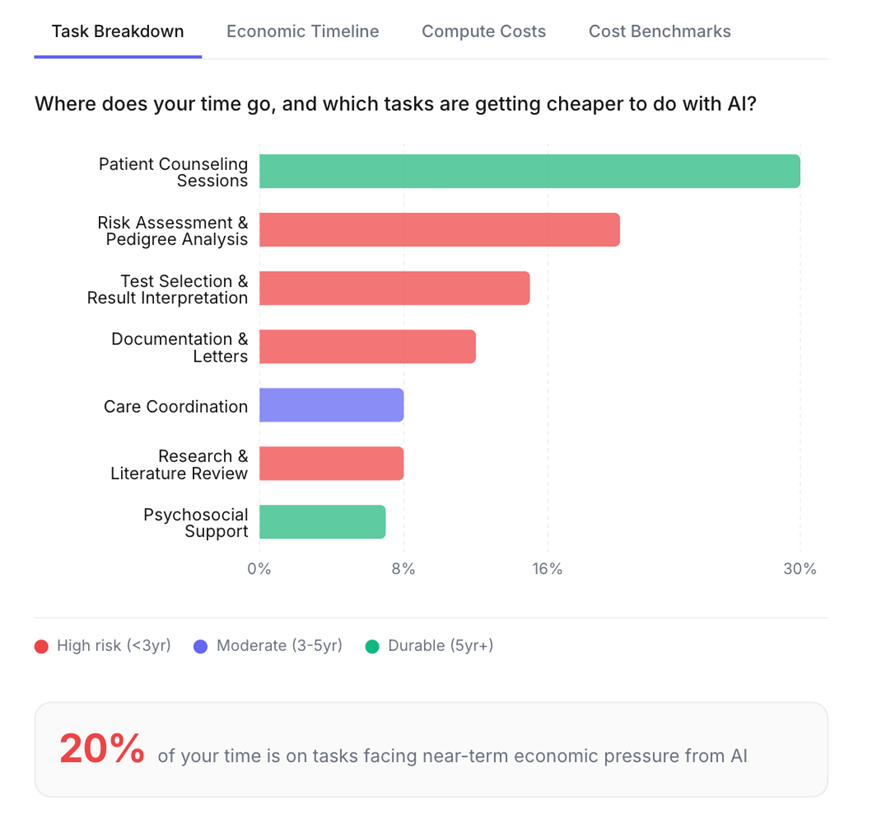

This free interactive tool breaks down how AI is predicted to affect specific tasks within hundreds of jobs, and genetic counselors were recently added. Users can explore which aspects of their work are considered most automatable versus most human-dependent, based on task-level analysis. The projections come from one individual's theoretical model rather than an official forecast, so treat them as a prompt for reflection, not a definitive answer!

Figure above shows summary assessment of the genetic counselor role and risk of AI-driven economic displacement.

Figure above shows task breakdown for a clinical genetic counselor, illustrating how time is allocated and which tasks are most likely to experience cost pressure from AI, along with the expected timing of technological capability and adoption.

Tag: Genetic Counseling

CULTIVATING ACADEMIC WRITING COMPETENCE IN MEDICAL STUDENTS IN THE ERA OF ARTIFICIAL INTELLIGENCE

Ye, 2026

As AI tools become prevalent in academic writing, this paper proposes a practical collaborative model defining the distinct roles of students, mentors and AI across three phases of the writing process: problem definition, literature retrieval and synthesis, and drafting and iterative refinement. The model positions AI as an efficiency enhancer and reflection catalyst while preserving the mentor's role as thought guide and value judge, with the explicit goal of preventing over-reliance. Useful for GC program supervisors, trainees and anyone navigating AI in their own writing practice.

Tags: Education, Scientific Writing, Publishing

TECH POLICY TRACKER

Tech Policy Press

A free, regularly updated tracker of laws, regulations, government investigations and litigation shaping accountability for technology companies, with AI laws well represented. A useful reference for GCs who want to stay oriented in a fast-moving regulatory landscape.

Tag: ELSI

RESOURCES

BRIDGE2AI DISCUSSION FORUM ON EMERGING ELSI ISSUES

Bridge2AI

Tags: Education, Medicine, ELSI

AN INTRODUCTION TO AI FOR CLINICIANS: TUTORIAL

JMIR, 2026

- This open-access tutorial was written specifically for practicing clinicians encountering AI tools in their work without prior formal training in AI. It covers AI fundamentals, how common clinical AI tools work, and how to critically evaluate AI outputs.

Tags: Education, Overview

GOOGLE SKILLS

Google

- Google launched a free learning platform in late 2025 with 3,000+ AI and technology courses drawing on content from Google Cloud, DeepMind and Grow with Google.

- The platform spans all levels and is the curriculum used by Google’s own internal teams.

Tag: Education

Want a deeper dive into technical resources?

Visit our AI/ML Resources page for additional courses on Python, statistics and other ways to learn more about AI/ML — often for free.

Have feedback on our content? Want to share a resource? Have an AI/ML anecdote to share from your field? Contact Us

NSGC AI/ML Subcommittee

The AI/ML Subcommittee is part of the National Society of Genetic Counselors’ (NSGC) Genomic Technologies Special Interest Group (SIG). We are dedicated to exploring and advancing the integration of AI and ML technologies to enhance the field of genetics and support the evolving role of genetic counselors.